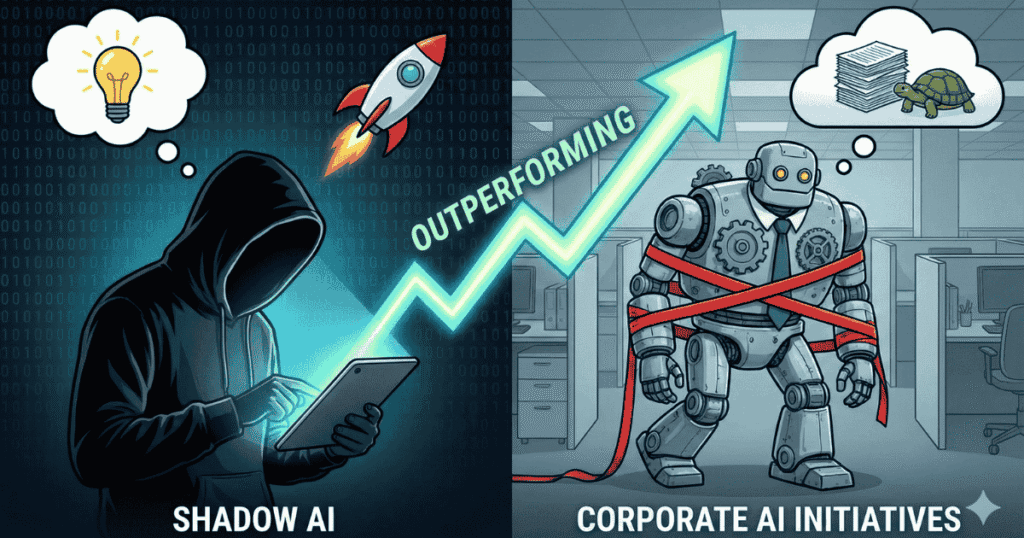

Why Shadow AI Is Outperforming Corporate AI Initiatives

Your employees are using ChatGPT. Right now. For work.

And they’re not telling you.

Not because they’re reckless. Not because they want to break rules.

But because it’s faster, cheaper, and actually helps them get work done.

While many organizations have invested heavily in enterprise AI tools, the reality inside teams looks very different. Employees across functions—legal, marketing, engineering, healthcare—are quietly relying on consumer AI tools to meet deadlines and stay productive.

This gap between policy and reality is where Shadow AI thrives.

Why This Article Exists

I’ve worked closely with teams adopting AI under real deadlines—where productivity pressure collides with security policy. This article reflects patterns I’ve repeatedly seen across startups and large organizations navigating AI adoption in practice, not theory.

The Shadow AI Blind Spot

Most companies believe they understand how AI is used inside their organization.

They don’t.

In practice, the majority of AI interactions happen outside approved systems—often through personal accounts, personal devices, or embedded AI features that bypass traditional monitoring entirely.

Employees aren’t trying to hide anything. They’re simply solving problems faster than corporate processes allow.

And here’s the uncomfortable part:

Consumer AI tools reach real production workflows far more often than sanctioned enterprise tools.

Not because they’re more “powerful.”

But because they’re usable.

The Real Cost Nobody Is Measuring

Companies spend hundreds of dollars, sometimes more than a thousand, per employee each year on AI initiatives. And yet most enterprise AI programs never make it past the pilot stage.

Meanwhile, an analyst paying a small monthly fee for ChatGPT Plus is shipping work faster than a six-figure “AI-powered” system stuck in onboarding.

Shadow AI isn’t just an unapproved expense.

It’s unmeasured productivity.

I’ve seen organizations with “ChatGPT – Approved” listed on dashboards, only to later discover employees were using personal accounts to analyze sensitive data under deadline pressure—creating regulatory risk that monitoring tools never caught.

They had visibility into the tool.

They had no visibility into how it was actually being used.

Why Enterprise AI Keeps Losing

This isn’t rebellion. It’s friction.

1. Speed Beats Policy

Procurement cycles take months.

ChatGPT takes seconds.

When deadlines are real and approved tools are still “in training,” employees don’t wait. They ship.

2. Usability Wins Every Time

Enterprise tools come with manuals, onboarding sessions, and certifications.

Consumer AI comes with a text box.

Professionals know what good AI feels like—because they already use it. When approved tools feel slower or clumsier, they get bypassed.

3. Output Quality Matters

The skepticism around enterprise AI often comes from people who use consumer tools heavily.

They aren’t anti-AI.

They’re anti-bad AI.

Where Shadow AI Goes Wrong

Shadow AI isn’t harmless. And pretending it is creates real risk.

- Data leakage: Sensitive information gets pasted into tools that were never meant to handle it.

- Compliance violations: Employees don’t always know which models are covered by regulatory safeguards—and which aren’t.

- No audit trail: Decisions get generated with no clear record of inputs, outputs, or accountability.

- Operational fragility: Unapproved tools quietly become dependencies in critical workflows.

The real danger isn’t that employees use AI.

It’s that they use it quietly, without anyone responsible for the outcome.

Real-World Wake-Up Calls

Several major organizations learned this the hard way.

At Samsung, employees reportedly pasted proprietary source code into ChatGPT while trying to get work done, which led the company to ban generative AI tools on company devices and rush to build private alternatives.

At Amazon, a company lawyer reportedly warned employees after noticing ChatGPT responses that closely resembled internal material, and the company tightened its guidance on sharing confidential information with generative AI tools.

What these cases have in common isn’t carelessness—it’s speed.

Employees were trying to work faster than corporate processes allowed.

The Security Theater Problem

Most enterprises have AI policies they can’t enforce.

Dashboards look clean.

Compliance reports look reassuring.

Meanwhile, AI is embedded inside SaaS platforms like collaboration tools, design software, CRMs, and analytics dashboards—often invisible to traditional security monitoring.

Executives think they’re governing AI.

In reality, they’re governing yesterday’s threat model.

Why Banning AI Doesn’t Work

Blocking ChatGPT feels decisive. It also fails—spectacularly.

Just like shadow IT, banning Shadow AI doesn’t eliminate usage. It pushes it underground.

Employees switch to personal devices. Personal networks. Personal accounts.

You lose visibility.

You lose trust.

And you still have the risk.

What Actually Works

Organizations that get this right do a few things differently.

1. They Start With “Why”

Instead of asking how to control AI, they ask why employees are using unauthorized tools in the first place.

The answer is usually simple:

The approved tools don’t solve the real problem.

2. They Build Visibility Before Enforcement

You can’t govern what you can’t see.

Visibility isn’t just “who used ChatGPT.”

It’s understanding what kind of data was shared and for what purpose.

There’s a difference between summarizing public research and pasting confidential financials into a personal AI account.
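To make that difference concrete, here is a minimal sketch of what "visibility" could look like in code. Everything in it is an assumption for illustration: the `UsageEvent` fields, the `SENSITIVE_PATTERNS` keyword lists, and the three risk levels are hypothetical, and a real program would derive them from its own data classification policy rather than a handful of regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only; tune these to your own
# data classification policy.
SENSITIVE_PATTERNS = {
    "financial": re.compile(r"\b(revenue|ebitda|forecast|balance sheet)\b", re.IGNORECASE),
    "credentials": re.compile(r"\b(api[_-]?key|password|secret)\b", re.IGNORECASE),
    "personal_data": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like shape
}


@dataclass
class UsageEvent:
    """One AI interaction, as a hypothetical monitoring layer might record it."""
    tool: str
    account_type: str  # "corporate" or "personal"
    purpose: str
    prompt: str


def classify(event: UsageEvent) -> dict:
    """Label an event by the kind of data shared, not just the tool used."""
    categories = sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(event.prompt)
    )
    if categories and event.account_type == "personal":
        risk = "high"    # sensitive data leaving through an unmanaged account
    elif categories:
        risk = "medium"  # sensitive data, but inside a managed account
    else:
        risk = "low"     # nothing sensitive detected
    return {
        "tool": event.tool,
        "purpose": event.purpose,
        "categories": categories,
        "risk": risk,
    }


public_research = UsageEvent(
    tool="ChatGPT",
    account_type="personal",
    purpose="summarize research",
    prompt="Summarize this published paper on transformer models.",
)
confidential_financials = UsageEvent(
    tool="ChatGPT",
    account_type="personal",
    purpose="analyze quarterly numbers",
    prompt="Here is our Q3 revenue forecast and EBITDA. Find anomalies.",
)

print(classify(public_research)["risk"])
print(classify(confidential_financials)["risk"])
```

Same tool, same personal account, very different risk. A dashboard that only records "who used ChatGPT" would treat both events as identical.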

3. They Provide Tools That Are Actually Better

Approved tools don’t need to be perfect.

They just need to be better than the free alternative.

Consumer AI can’t access internal systems, structured data, or proprietary knowledge safely. Enterprise AI can—if it’s designed well.

That should be the selling point.

4. They Train for Reality, Not Compliance

People don’t need more lectures about what they can’t do.

They need practical demonstrations of how to use approved AI tools to:

- Draft reports faster

- Analyze data more efficiently

- Reduce repetitive work

Hands-on training beats policy decks every time.

5. They Govern Without Suffocating

Effective governance answers:

- What tools are approved—and why?

- What data is safe to share?

- How fast can new use cases be approved?

- Who actually owns AI risk?

When ownership is fragmented, accountability disappears.
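One way to keep those answers from living only in a policy deck is to write them down as data that people and tools can query. The sketch below is an assumption about how that might look, not a reference design: the tool names, data classes, `ToolPolicy` fields, and the `security-team` owner are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical policy record answering the governance questions."""
    approved: bool
    reason: str              # why the tool is (or is not) approved
    allowed_data: frozenset  # data classes that are safe to share
    risk_owner: str          # the single team accountable for this tool


# Illustrative entries only; names and data classes are placeholders.
POLICY = {
    "enterprise-assistant": ToolPolicy(
        approved=True,
        reason="contract covers internal data",
        allowed_data=frozenset({"public", "internal", "confidential"}),
        risk_owner="security-team",
    ),
    "consumer-chatbot": ToolPolicy(
        approved=True,
        reason="fine for public material",
        allowed_data=frozenset({"public"}),
        risk_owner="security-team",
    ),
}


def check(tool: str, data_class: str) -> tuple:
    """Return (allowed, explanation) for sharing a data class with a tool."""
    policy = POLICY.get(tool)
    if policy is None:
        return (False, "tool not reviewed yet; submit a use-case request")
    if not policy.approved:
        return (False, f"{tool} is not approved: {policy.reason}")
    if data_class not in policy.allowed_data:
        return (False, f"{data_class} data is not allowed in {tool}; ask {policy.risk_owner}")
    return (True, policy.reason)


print(check("consumer-chatbot", "public"))
print(check("consumer-chatbot", "confidential"))
print(check("new-ai-plugin", "internal"))
```

The design choice worth noting is that every refusal comes with a next step and a named owner, so a "no" points employees toward an approved path instead of a personal account.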

The Organizations That Are Winning

They measure outcomes, not licenses.

They offer multiple tools instead of one bloated solution.

They move from pilot to production in weeks—not quarters.

And most importantly, they learn from Shadow AI instead of pretending it doesn’t exist.

Employees are already telling you what works.

The question is whether you’re listening.

The Window Is Closing

AI adoption isn’t a future event. It’s already happening—outside official channels.

Companies that figure this out early will gain productivity advantages measured in years.

Those that don’t will spend the next few quarters explaining why their AI investments delivered zero ROI while their competitors moved faster with fewer tools.

The Uncomfortable Question

How much productivity are you losing by forcing employees to use tools that don’t work as well as the free alternatives they’re already using?

Your employees already know the answer.

They live it every day—while waiting for your approved tool to finish loading.

You can either enable that productivity with visibility and governance.

Or you can keep pretending control exists—while the real work happens in the shadows.

Final note

Shadow AI is outperforming corporate AI because employees optimize for getting work done.

You can either join that optimization.

Or be replaced by it.