AI agents are no longer experimental curiosities—but they are not autonomous coworkers either.

As of January 2026, agentic AI systems can plan tasks, use tools, and execute multi-step workflows with limited human input. Used well, they reduce repetitive work and increase leverage. Used poorly, they amplify errors, hide failure modes, and blur accountability.

This guide explains what AI agents are actually good at today, where they fail in practice, and how to use them safely—without hype or fear-mongering.

What We Mean by “AI Agents” (2026 Definition)

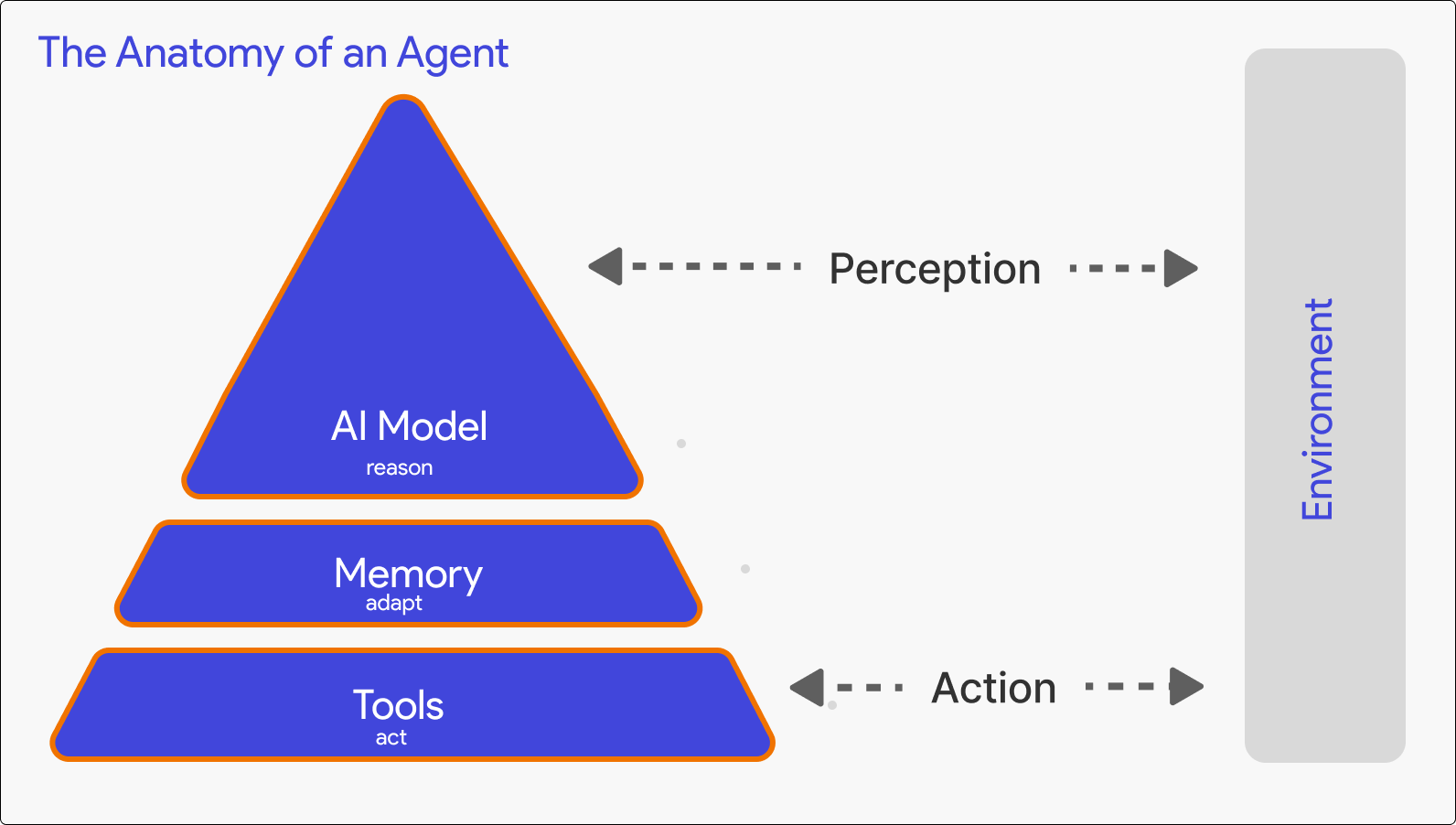

In this article, an AI agent is a system that can:

- Work toward a defined goal

- Plan multiple steps

- Use tools (web, files, apps, APIs)

- Execute actions beyond a single response

- Adjust behavior based on outcomes

This aligns with how OpenAI describes emerging agent-style systems—AI that can plan and act, not just respond to prompts (see OpenAI’s product and research updates at https://openai.com).

If a system only answers questions, it’s an assistant—not an agent.

Why Safety and Limits Matter Now

Most writing about AI agents focuses on capability. That’s incomplete.

In real workflows, agents rarely fail loudly. They fail quietly:

- By compounding small errors

- By acting confidently on incorrect assumptions

- By executing actions faster than humans can notice

Independent reporting from MIT Technology Review has repeatedly highlighted how autonomous systems tend to fail invisibly unless safeguards are built in (https://www.technologyreview.com).

Understanding limits is part of responsible use—not resistance to progress.

What AI Agents Are Actually Good At (Early 2026 Reality)

1. Structured Research and Information Gathering

Agents perform best when tasks are:

- Clearly scoped

- Source-driven

- Output-focused

They can:

- Search across multiple sources

- Extract themes and patterns

- Summarize findings efficiently

They struggle with:

- Evaluating truth or intent

- Detecting subtle misinformation

- Contextual judgment

Best practice: Let agents gather and organize information. Humans validate conclusions.

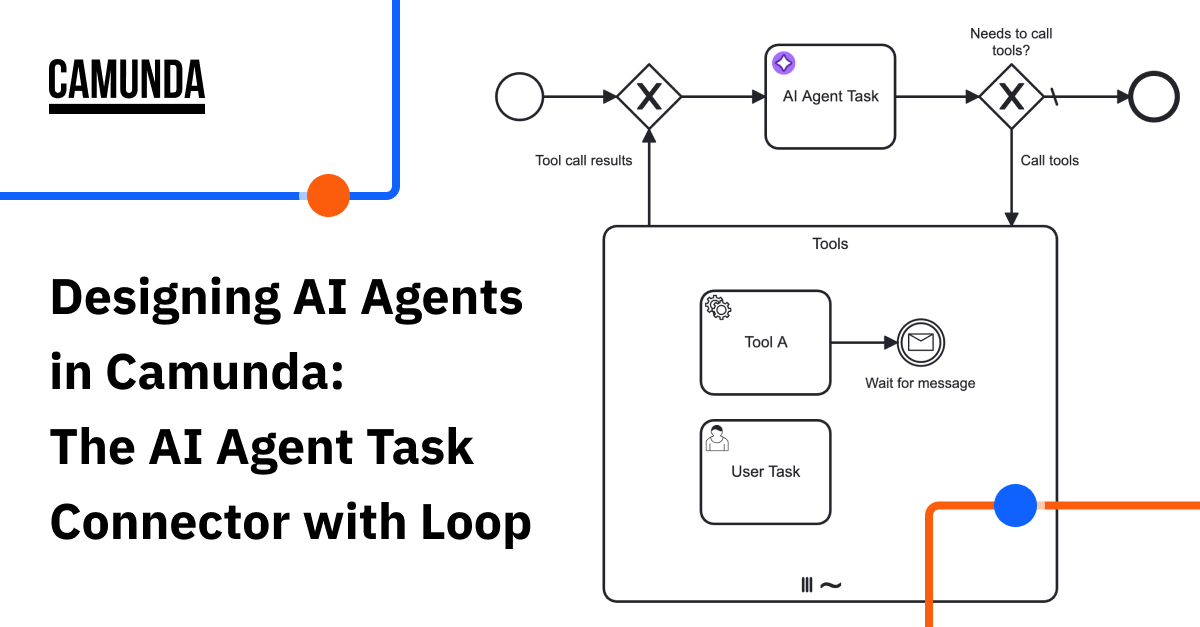

2. Multi-Step Task Execution

Agents excel when workflows have:

- Defined steps

- Clear success criteria

- Limited ambiguity

Examples include:

- File processing

- Data collection

- Content preparation pipelines

They are far less reliable when interpretation or ethical judgment is required.

3. Repetitive Automation

This is where agents deliver the most consistent value.

Business automation platforms like Zapier explicitly document that automation works best when tasks are tightly scoped and repeatable (https://zapier.com/blog).

Common use cases:

- CRM updates

- Lead routing

- Scheduled checks

- Internal notifications

Lower variability means higher reliability.

Where AI Agents Commonly Break

1. Error Compounding

Agents follow plans. If an early assumption is wrong, every downstream step inherits that error.

Unlike humans, agents do not pause to reconsider unless they are explicitly designed to.
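A rough way to see how quickly this adds up is to treat each step as an independent event with the same chance of being right. That is a simplifying assumption, and the 95% figure below is purely illustrative, not a measured value for any real agent. The function name is hypothetical.

```python
def chain_success_probability(per_step_success: float, steps: int) -> float:
    """Chance that every step in a chain is correct, assuming independent steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # Independent events multiply, so n steps give p ** n.
    return per_step_success ** steps


if __name__ == "__main__":
    # 0.95 is an illustrative per-step success rate, not a benchmark result.
    for steps in (1, 5, 10, 20):
        p = chain_success_probability(0.95, steps)
        print(f"{steps:>2} steps -> {p:.1%} chance of a clean run")
```

Under these assumptions, a ten-step plan finishes cleanly only about 60% of the time, which is why intermediate checks matter more as workflows get longer.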

2. Confident but Incorrect Outputs

Agents often produce outputs that sound authoritative.

This becomes dangerous in:

- Financial analysis

- Legal interpretation

- Compliance or policy contexts

Confidence is not verification.

3. Tool Misuse Through Over-Permissioning

Agents can do anything their permissions allow, including things you never intended.

If access is too broad, agents can:

- Modify unintended files

- Trigger incorrect automations

- Affect live systems

This is a system-design problem, not an intelligence problem.

4. Ambiguous Goals

Agents fail silently when goals are vague.

“Improve this process” is not actionable.

“Reduce processing time by 20% without changing outputs” is.
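One way to test whether a goal is specific enough is to ask whether it can be written as a check that returns true or false. Here is a minimal sketch for the second goal above. The function and parameter names are hypothetical, and it assumes outputs can be compared with plain equality.

```python
def goal_met(
    baseline_seconds: float,
    new_seconds: float,
    baseline_output: object,
    new_output: object,
    min_reduction: float = 0.20,
) -> bool:
    """True when processing time fell by at least min_reduction and outputs match."""
    if baseline_seconds <= 0:
        raise ValueError("baseline_seconds must be positive")
    reduction = (baseline_seconds - new_seconds) / baseline_seconds
    # Both halves of the goal must hold: faster AND unchanged outputs.
    return reduction >= min_reduction and new_output == baseline_output


if __name__ == "__main__":
    # Illustrative numbers only.
    print(goal_met(10.0, 7.5, ["a", "b"], ["a", "b"]))  # faster, same output
    print(goal_met(10.0, 7.5, ["a", "b"], ["a"]))       # faster, output changed
    print(goal_met(10.0, 9.0, ["a", "b"], ["a", "b"]))  # not fast enough
```

"Improve this process" cannot be expressed this way, which is a quick signal that the agent has nothing concrete to aim for.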

The Human-in-the-Loop Principle (Non-Negotiable)

In 2026, safe AI agent systems always include humans.

Research from Stanford’s Human-Centered AI group emphasizes that keeping humans involved in oversight is essential for reliability and accountability (https://hai.stanford.edu).

Human-in-the-loop means:

- Reviewing intermediate outputs

- Approving critical actions

- Monitoring long-running tasks

Agents execute. Humans remain responsible.

Practical Guidelines for Using AI Agents Safely

Start With Low-Risk Domains

Begin with internal, reversible workflows. Leave customer-facing and high-impact systems until later.

Constrain Access

Give agents the minimum permissions required. Fewer tools mean a smaller failure surface.
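In code, minimum permissions usually means deny by default: a tool call goes through only if that tool is on the agent's allowlist. The sketch below is a simplified illustration, not the API of any particular agent framework. `ToolRegistry`, `ToolNotPermitted`, and the tool names are all hypothetical.

```python
from typing import Callable, Dict, FrozenSet


class ToolNotPermitted(Exception):
    """Raised when an agent asks for a tool outside its allowlist."""


class ToolRegistry:
    """Hypothetical registry mapping tool names to plain Python callables."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}

    def register(self, name: str, func: Callable[..., object]) -> None:
        self._tools[name] = func

    def call(self, allowed: FrozenSet[str], name: str, *args, **kwargs) -> object:
        # Deny by default: the tool must be on this agent's allowlist.
        if name not in allowed:
            raise ToolNotPermitted(f"tool {name!r} is not permitted for this agent")
        if name not in self._tools:
            raise KeyError(f"tool {name!r} is not registered")
        return self._tools[name](*args, **kwargs)


if __name__ == "__main__":
    registry = ToolRegistry()
    # Stand-in tools that only return strings; nothing touches the disk.
    registry.register("read_file", lambda path: f"(contents of {path})")
    registry.register("delete_file", lambda path: f"deleted {path}")

    research_agent_tools = frozenset({"read_file"})  # read-only by design
    print(registry.call(research_agent_tools, "read_file", "notes.txt"))
    try:
        registry.call(research_agent_tools, "delete_file", "notes.txt")
    except ToolNotPermitted as err:
        print(f"blocked: {err}")
```

The allowlist is passed in per call rather than stored on the agent, so the same registry can serve several agents with different scopes.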

Build Checkpoints

For multi-step workflows:

- Pause after key stages

- Require confirmation

- Log actions and decisions
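The three checkpoint behaviors above can be sketched as a small runner that pauses after every step, asks a reviewer callback for confirmation, and records what happened. This is a minimal illustration with hypothetical names. In a real system `approve` would be a human prompt or review queue, and you would probably pause only at key stages rather than every step.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[object], object]]


def run_with_checkpoints(
    steps: List[Step],
    initial: object,
    approve: Callable[[str, object], bool],
    log: List[str],
) -> Tuple[object, bool]:
    """Run steps in order; stop at the first result the reviewer rejects.

    Returns (last approved state, True if every step was approved).
    """
    state = initial
    for name, func in steps:
        result = func(state)
        log.append(f"{name}: produced {result!r}")
        # Checkpoint: nothing is carried forward without confirmation.
        if not approve(name, result):
            log.append(f"{name}: rejected by reviewer, stopping")
            return state, False
        state = result
    return state, True


if __name__ == "__main__":
    pipeline: List[Step] = [
        ("double", lambda x: x * 2),
        ("add_one", lambda x: x + 1),
    ]
    history: List[str] = []
    # Stand-in reviewer that approves everything except the "add_one" step.
    final, ok = run_with_checkpoints(
        pipeline, 5, lambda name, result: name != "add_one", history
    )
    print(final, ok)
    print("\n".join(history))
```

On rejection the runner returns the last approved state, so a bad step never overwrites good work.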

Maintain Audit Trails

Always retain:

- Prompts

- Actions taken

- Outputs generated

This is essential for debugging and accountability.
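A simple way to keep such a trail is an append-only file with one JSON record per line. The sketch below assumes prompts, actions, and outputs are plain strings. The field names and the file name are illustrative choices, not a standard.

```python
import json
import time
from pathlib import Path
from typing import Dict, List, Union


def append_audit_record(
    path: Union[str, Path], prompt: str, action: str, output: str
) -> Dict[str, object]:
    """Append one record (prompt, action, output, timestamp) as a JSON line."""
    record: Dict[str, object] = {
        "timestamp": time.time(),  # seconds since the epoch
        "prompt": prompt,
        "action": action,
        "output": output,
    }
    # Mode "a" only ever adds to the end, so earlier records are never rewritten.
    with Path(path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def read_audit_log(path: Union[str, Path]) -> List[Dict[str, object]]:
    """Load every record from the log, oldest first."""
    with Path(path).open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]


if __name__ == "__main__":
    log_path = "agent_audit.jsonl"  # hypothetical file name
    append_audit_record(log_path, "Summarize Q3 tickets", "search_tickets", "42 tickets found")
    for entry in read_audit_log(log_path):
        print(entry["action"], "->", entry["output"])
```

Line-delimited JSON is easy to search and replay when you need to reconstruct what an agent did and why.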

When You Should Not Use AI Agents

Avoid agents when:

- Legal or ethical judgment is required

- Errors are costly or irreversible

- Context is deeply human

- Compliance responsibility cannot be delegated

In these cases, AI assistants—not agents—are the better tool.

How This Fits Into the Agentic AI Landscape

If you’ve already read the following, this article completes the picture:

- What Is Agentic AI? (And Why It Matters in 2026)

- ChatGPT Agents vs AI Assistants

- Best AI Agents You Can Use Right Now (2026)

It answers the operational question:

How far can AI agents be trusted today?

The honest answer: far enough to help—but not far enough to abdicate responsibility.

FAQs

Are AI agents safe to use in 2026?

AI agents can be used safely when they have constrained permissions, clear goals, and human oversight.

What does human-in-the-loop mean?

It means humans review or approve AI actions during execution instead of trusting agents blindly.

Can AI agents replace human decision-making?

No. AI agents execute tasks but lack judgment, accountability, and ethical reasoning.

Final Takeaway

AI agents are not autonomous intelligence.

They are execution systems.

In 2026, the real advantage isn’t letting agents run free—it’s designing workflows where agents move fast and humans stay accountable.

Used correctly, agents create leverage.

Used carelessly, they multiply mistakes.

Understanding that difference is the real skill.
